– Gareth Shaw, MD Pera Prometheus

Artificial Intelligence (AI) is no longer just a research topic for the UK Ministry of Defence (MOD). It is beginning to be embedded into live programmes that analyse intelligence at scale, optimise logistics, and support complex decision-making. The Defence Artificial Intelligence Strategy (2022) sets out the ambition to use AI for operational advantage while ensuring it is used responsibly and securely. Industry partners supporting MOD on AI projects, or helping it move towards autonomy, must deliver AI that is dependable, ethical, and above all secure against adversarial threats. AI is exciting, but it also introduces new attack surfaces, governance headaches, and ethical dilemmas.

As a security consultant, I’ve seen how industry partners are grappling with this shift. I am by no means an AI expert, but I understand security and its core principles. The good news is that the UK has built a useful framework of policies and guidance on AI; the bad news, in my opinion, is that it is still fragmented, inconsistently applied, and leaves organisations to do a lot of interpretation themselves. In this blog I set out my opinion on the current state of AI guidance and frameworks.

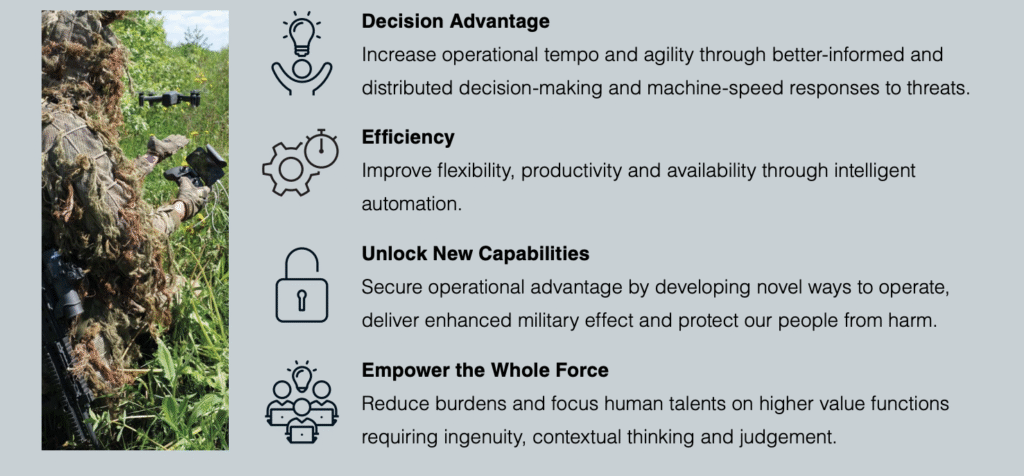

Figure – Priority Outcome Through AI (Extract from The Defence AI Strategy 2022)

Current Guidance and Frameworks

- At the heart of defence policy is JSP 936: Dependable AI in Defence (2024). It is the MOD’s directive for AI governance, safety and assurance across the lifecycle, intended to bridge high-level ethics and practical implementation as Defence becomes “AI-ready.” For industry partners, JSP 936 is the closest thing to a single source of policy truth, but it remains a directive rather than a certifiable scheme you can “pass,” which matters when planning evidence and audits.

- The Defence AI Strategy (2022) sets the strategic ambition: exploiting AI at pace for operational advantage while upholding trust, ethics and UK values. It explains intent and sets expectations for collaboration with industry, but deliberately stops short of providing detailed controls, leaving industry partners to connect the dots with other documents and interpret the security and assurance choices themselves.

- The AI Playbook (2025), developed for the UK Government, offers advice on how to adopt AI safely and effectively. Defence teams are increasingly using it as a reference for governance processes such as risk assessments, human oversight and impact considerations. It complements JSP 936 without replacing defence-specific duties.

- For technical security, the most actionable baseline comes from the National Cyber Security Centre (NCSC). Their Guidelines for Secure AI System Development and Machine Learning Security Principles cover secure design, deployment, and common threats like adversarial manipulation, data poisoning, and model theft. These are widely treated as the reference controls that MOD teams expect to see reflected in supplier assurance plans.

- MOD’s Industry Security Notices (ISNs) are another source. They are published regularly to update suppliers on security requirements, covering topics such as cloud, handling classified data, and DEFCON (Defence Condition) processes. At the time of publication, no ISN relating to AI has been released.

- There is also a wider assurance ecosystem. DSIT’s Introduction to AI Assurance gives organisations methods for evidencing safety and performance, and the Alan Turing Institute has produced a framework for assuring third-party AI systems in UK national security. These help suppliers and customers align on how to assess risks before deployment, and they are increasingly relevant to defence procurements.

- International standards add another layer. ISO/IEC 42001:2023 is the world’s first AI management system standard, allowing organisations to be certified for AI governance and risk management. Although not Defence-specific, it is becoming a useful way for suppliers to demonstrate maturity.

Key Security Concerns for Industry Partners

The security concerns for suppliers working with MOD on AI projects fall into seven key areas:

- Mission-ready assurance – AI in defence must work safely in the real world, not just in a controlled lab. That means proving it can survive deliberate attacks, such as feeding it false data, tricking it with sneaky inputs/prompts, or trying to steal the model. The NCSC has explained what these threats look like. Industry partners must show how they will deal with them: by running “red team” attack tests, monitoring system behaviour, having the ability to roll back changes quickly, and preparing safety cases in line with JSP 936.

- Supply chain integrity – Most defence AI systems are built using parts from many different places: open-source software, pre-trained models, cloud tools, and external data. If just one of these parts is compromised, the whole system could be at risk. Supply chain partners need to prove where each part came from, sign and verify each one, and show that the build process can be repeated in a secure way. Without this, the MOD cannot be confident the system is safe to use.

- Sensitive data handling – AI needs large amounts of data, but in defence, much of that data is sensitive or classified. If it is handled wrongly, it could leak. Suppliers must keep classified and unclassified data strictly apart, show clear evidence of where all data came from, and use special secure environments (sometimes called confidential computing) when handling high-risk information.

- Autonomy clarity – Not all AI systems are the same. Some only help with simple tasks, others can act on their own under certain rules, and a few may operate fully without human control. Each type requires different safeguards. Too often, projects wait too long to say how much autonomy their system really has. Every supplier should provide an Autonomy Statement of Intent at the start, so MOD knows what level of oversight and security is needed.

- Identity and access – Humans and machines need different ways of proving who they are. For people, the best approach is passkeys and multi-factor authentication (MFA), which make it very hard for attackers to steal logins. For AI agents and systems, stronger tools are required, such as hardware-based keys, short-lived tokens that expire quickly, and checks (called remote attestation) to make sure the software has not been tampered with. This separation keeps control and accountability clear.

- Governance and oversight – AI security is not just about technology; it’s also about people and responsibilities. Who sets the rules for how the AI can be used? Who approves any changes to the system? Who can step in and stop the AI if something goes wrong? Without clear answers, projects can end up with confusion and risk. The Defence AI Centre (DAIC) is working to improve this, but suppliers should also set out clear governance and assurance plans from the start.

- Policy fragmentation – There are many useful documents: JSP 936, the Defence AI Strategy, the NCSC’s guidance, the AI Playbook, and MOD’s Industry Security Notices (ISNs). The problem is that they are spread across different places, updated at different times, and not always applied consistently. There is no single “AI security certification/assurance method” for defence projects. This means suppliers must pull everything together into their own clear and complete security case.

All of the above can be addressed by adopting a robust Secure by Design (SbD) approach to AI design and development (see ISN 2023/10 and DefStan 05-138), which helps identify the security risks unique to AI development or behaviour. An SbD approach also manages the through-life aspects that any AI development project will have.
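To make the "short-lived tokens" idea from the identity and access point more concrete, here is a minimal Python sketch of a machine credential that expires quickly. It is an illustration only, not a recommended or MOD-endorsed design: the names `issue_token`, `verify_token` and `SECRET` are hypothetical, the key is hard-coded purely for demonstration, and a real system would keep the key in hardware and most likely use an established token standard.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

# Hypothetical demo key. In a real system this would sit in a hardware-backed key store.
SECRET = b"demo-only-secret"


def issue_token(agent_id: str, ttl_seconds: int, now: Optional[float] = None) -> str:
    """Return a signed token naming the agent and carrying an expiry time."""
    issued_at = time.time() if now is None else now
    payload = json.dumps({"agent": agent_id, "exp": issued_at + ttl_seconds}).encode()
    signature = hmac.new(SECRET, payload, hashlib.sha256).digest()
    return (
        base64.urlsafe_b64encode(payload).decode()
        + "."
        + base64.urlsafe_b64encode(signature).decode()
    )


def verify_token(token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the agent id if the signature is valid and the token has not expired."""
    current = time.time() if now is None else now
    try:
        payload_b64, signature_b64 = token.split(".")
        payload = base64.urlsafe_b64decode(payload_b64)
        signature = base64.urlsafe_b64decode(signature_b64)
    except ValueError:
        return None  # malformed token
    expected = hmac.new(SECRET, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        return None  # payload or signature was altered
    claims = json.loads(payload)
    if current >= claims["exp"]:
        return None  # token has expired
    return claims["agent"]
```

The point of the short lifetime is that a stolen token is only useful for a brief window. The signature check runs before the expiry check so that an attacker cannot simply edit the expiry time.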

Are The Current Frameworks Sufficient?

JSP 936 gives Defence a specific AI directive, and the surrounding frameworks are individually strong, even though they lack structure and cohesion as a set:

- NCSC provides recognised, detailed technical guidance.

- DSIT and the Alan Turing Institute provide assurance methods.

- ISNs keep suppliers updated on evolving policy.

- ISO/IEC 42001 offers international governance credibility.

Together, the above set a solid foundation.

However, they are not enough on their own. The lack of a unified assurance mechanism, unclear setting and identification of requirements, and inconsistent application of assurance across programmes mean suppliers still face uncertainty. Currently industry partners must “stitch together” guidance into a coherent case, which can lead to duplication, gaps, or inconsistent evidence demands.

What Needs to Happen Next

To ensure a safe environment in this AI era, several improvements are, in my opinion, essential:

- Defence AI Assurance Pathway – Develop a tiered assurance scheme that combines JSP 936, NCSC controls, and assurance artefacts into a repeatable, auditable process linked to autonomy level.

- Requirements Specification – Communicate a clear set of user requirements (including security) to the intended supplier in a contractual document.

- Mandatory autonomy declarations – Require suppliers to state autonomy level and safeguards at project start.

- Deep supply chain attestations – Enforce signed datasets, model Software Bills of Materials (SBOMs), and reproducible builds down to subcontractors.

- Reference designs – MOD should publish ready-made blueprints for areas like cross-domain data use, confidential computing, and human/machine approval steps. This would save suppliers time, avoid mistakes, and ensure security is applied consistently.

- Align with standards – Recognise ISO/IEC 42001 and the new British Standards Institution (BSI) AI audit standard (BS ISO/IEC 42006) to streamline external audits and reduce duplication.
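As a rough illustration of what a supply chain attestation check could look like in practice, the Python sketch below records a SHA-256 digest for every file in a delivery (model weights, datasets, code) and later reports anything missing, modified, or unlisted. It is a simplified example under stated assumptions: the function names and manifest layout are hypothetical, and it only checks integrity. A real attestation would also cryptographically sign the manifest itself so that its origin can be proven.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large model files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root: Path) -> Dict[str, str]:
    """Map each file's relative path to its SHA-256 digest."""
    return {
        item.relative_to(root).as_posix(): sha256_of(item)
        for item in sorted(root.rglob("*"))
        if item.is_file()
    }


def verify_manifest(root: Path, manifest: Dict[str, str]) -> List[str]:
    """Return a list of problems; an empty list means the delivery matches the manifest."""
    problems = []
    for name, expected in manifest.items():
        target = root / name
        if not target.is_file():
            problems.append(f"missing: {name}")
        elif sha256_of(target) != expected:
            problems.append(f"modified: {name}")
    for item in sorted(root.rglob("*")):
        name = item.relative_to(root).as_posix()
        if item.is_file() and name not in manifest:
            problems.append(f"unlisted: {name}")
    return problems
```

A supplier would run `build_manifest` at build time and hand the result over alongside the delivery; the recipient runs `verify_manifest` before use and treats any reported problem as a reason to stop and investigate.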

Final Thoughts

The UK has made strong progress with the Defence Directive in JSP 936, internationally respected NCSC security guidance, assurance tools from DSIT and the Turing Institute, and ISNs to keep suppliers aligned. However, these do not yet add up to a coherent, auditable assurance pathway. Suppliers still face fragmented documents, inconsistent expectations, and a lack of AI-specific requirements.

For industry partners, the right move is to treat NCSC controls as the baseline, declare autonomy early, prove provenance, separate human and machine identity, and formalise governance in Security & Assurance Plans. For MOD, the priority should be to clarify and communicate requirements, consolidate guidance, define the certification pathway, and provide reference designs.

AI can provide the UK with real operational advantage. But without trust, resilience, and security, it risks becoming a liability. Trust must be engineered in, across systems and supply chains. In defence, that trust is as critical as the technology itself.